For as long as computers have existed, physicists have used them as tools to understand, predict and model the natural world. Computing experts, for their part, have used advances in physics to develop machines that are faster, smarter and more ubiquitous than ever. This collection celebrates the latest phase in this symbiotic relationship, as the rise of artificial intelligence and quantum computing opens up new possibilities in basic and applied research.

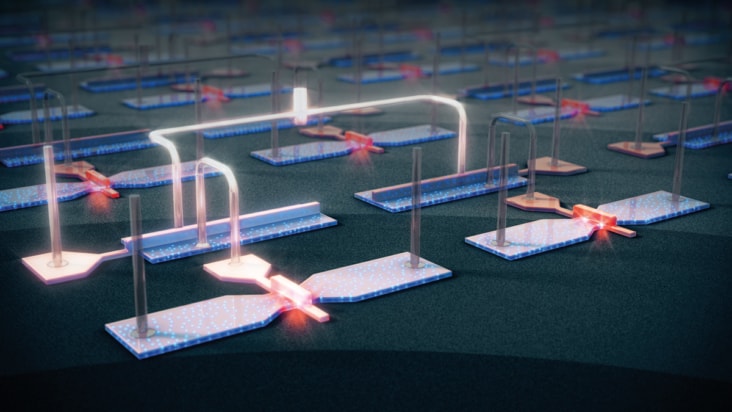

As quantum computing matures, will decades of engineering give silicon qubits an edge? Fernando Gonzalez-Zalba, Tsung-Yeh Yang and Alessandro Rossi think so

Physicist and Raspberry Pi inventor Eben Upton explains how simple computers are becoming integral to the Internet of Things

Physics World journalists discuss the week’s highlights

James McKenzie explains how Tim Berners-Lee’s invention of the World Wide Web at CERN has revolutionized how we trade

Tim Berners-Lee predicts the future of online publishing in an article he wrote for Physics World in 1992

Jess Wade illustrates the history of the World Wide Web, from the technology that enabled it to the staple it is today

Emerging technologies shaping our connected world

Fifth episode in mini-series revisits the birth of the Web and the challenges it now faces

Computing is transforming scientific research, but are researchers and software code adapting at the same rate? Benjamin Skuse finds out

Read article: How scientific models both help and deceive us in decision making

Michela Massimi reviews Escape from Model Land by Erica Thompson

Read article: Particle physicists get AI help with beam dynamics

New machine learning algorithm accurately reconstructs the shapes of particle accelerator beams from tiny amounts of data

Read article: Climate-change ‘fingerprint’ is identified in the upper atmosphere

Observations agree with computer simulations of global warming

Read article: Threshold for X-ray flashes from lightning is identified by simulations

Research could lead to new types of X-ray sources

Read article: ‘More than Moore’: a glimpse at the future of computing

Available to watch now: IOP Publishing explores what lies beyond the era of Moore’s law and looks at some of the technologies that could play roles in the computers of the future

Read article: ‘Forest of cylindrical obstacles’ slows avalanche flow

New model of downslope granular movement could reduce the destructive power of avalanches and other dangerous geophysical phenomena

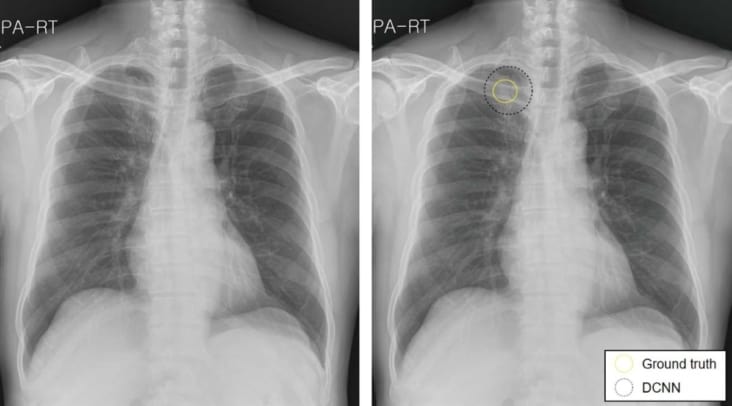

Introducing artificial intelligence into the clinical workflow helps radiologists detect lung cancer lesions on chest X-rays and dismiss false positives

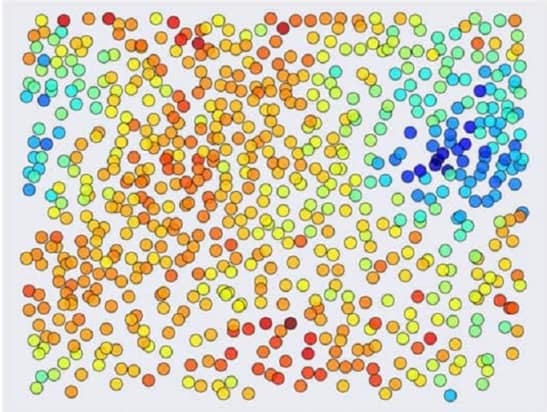

Algorithms help materials scientists recognize patterns in structure-function relationships

A deep learning algorithm detects brain haemorrhages on head CT scans with comparable performance to highly trained radiologists

An artificial intelligence model can identify patients with intermittent atrial fibrillation from scans performed during normal heart rhythm

Proof-of-concept demonstration done using two superconducting qubits

An image-based artificial intelligence framework predicts a personalized radiation dose that minimizes the risk of treatment failure

A machine learning algorithm can read electroencephalograms as well as clinicians

Read article: IBM’s 127-qubit processor shows quantum advantage without error correction

Quantum error mitigation used to calculate 2D Ising model

Read article: Real-time error correction extends the lifetime of quantum information

Researchers perform the first experimental demonstration of error correction significantly extending the lifetime of information in a quantum system

Read article: Breakthrough in quantum error correction could lead to large-scale quantum computers

Errors can be reduced by increasing the number of qubits

Read article: Quantum processors still struggle to simulate complex molecules

Latest results show that classical computers retain an edge when simulating quantum chemistry problems – at least for now

Read article: Quantum teleportation opens a ‘wormhole in space–time’

String theory and quantum gravity could be put to the test by quantum processor

Advances in calibration routines and device fabrication lead to high-fidelity operations
